|

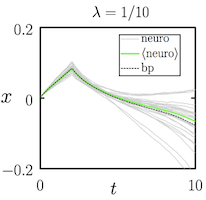

Correspondence between neuroevolution and gradient descent

S. Whitelam, V. Selin, S.-W. Park, I. Tamblyn. Nature Communications 12, 6317 (2021).

Neuroevolution and gradient descent are two approaches to training neural networks that are usually considered fundamentally different, and the relationship between them has remained unclear. In this work, we show analytically that training a neural network by conditioned stochastic mutation of its weights (a form of neuroevolution) is equivalent, in the limit of small mutations, to gradient descent on the loss function in the presence of Gaussian white noise. Averaged over independent realizations of the learning process, neuroevolution is equivalent to gradient descent on the loss function. We use numerical simulations to demonstrate that this correspondence can be observed for finite mutations, for both shallow and deep neural networks. These results provide a rigorous mathematical connection between the two families of training methods, opening the door to cross-pollination of ideas and techniques between the evolutionary computing and deep learning communities. |
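
The correspondence stated in the abstract can be checked numerically on a toy problem. The sketch below is illustrative only and is not taken from the paper's code. It assumes a Metropolis-style acceptance rule, in which a Gaussian mutation of scale `sigma` is accepted with probability `min(1, exp(-beta * dL))`, and a hypothetical quadratic loss. The names `loss`, `grad`, `mean_mutation_step`, `sigma`, and `beta` are all introduced here for illustration. Under these assumptions, a first-order expansion in the mutation size gives an average accepted step of `-(beta * sigma**2 / 2) * grad L`, which is a gradient-descent step.

```python
import numpy as np


def loss(w, a):
    """Hypothetical quadratic loss L(w) = 0.5 * sum_i a_i * w_i**2."""
    return 0.5 * np.sum(a * w**2, axis=-1)


def grad(w, a):
    """Analytic gradient of the quadratic loss above."""
    return a * w


def mean_mutation_step(w, a, sigma, beta, n_trials, rng):
    """Average weight change over many independent mutation attempts at w.

    Each attempt adds Gaussian noise of scale sigma to every weight and is
    accepted with probability min(1, exp(-beta * dL)) (an assumed rule).
    Rejected attempts contribute a zero step.
    """
    eps = rng.normal(0.0, sigma, size=(n_trials, w.size))
    d_loss = loss(w + eps, a) - loss(w, a)
    accept_prob = np.minimum(1.0, np.exp(-beta * d_loss))
    accepted = rng.random(n_trials) < accept_prob
    return (eps * accepted[:, None]).mean(axis=0)


rng = np.random.default_rng(0)
w = np.array([1.0, -1.0, 0.5, -0.5, 1.0])   # arbitrary test point
a = np.array([1.0, 2.0, 1.0, 2.0, 1.0])     # arbitrary curvatures
sigma, beta = 0.01, 5.0

step = mean_mutation_step(w, a, sigma, beta, 400_000, rng)
predicted = -0.5 * beta * sigma**2 * grad(w, a)  # small-mutation prediction

cosine = step @ predicted / (np.linalg.norm(step) * np.linalg.norm(predicted))
ratio = np.linalg.norm(step) / np.linalg.norm(predicted)
print(f"cosine similarity with -grad: {cosine:.4f}")
print(f"|mean step| / |predicted step|: {ratio:.3f}")
```

The averaged step should point along the negative gradient. Its magnitude approaches the first-order prediction as `beta * sigma * |grad L|` shrinks, although smaller mutations need more trials to resolve the drift above the sampling noise.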