
Training neural networks using Metropolis Monte Carlo and an adaptive variant

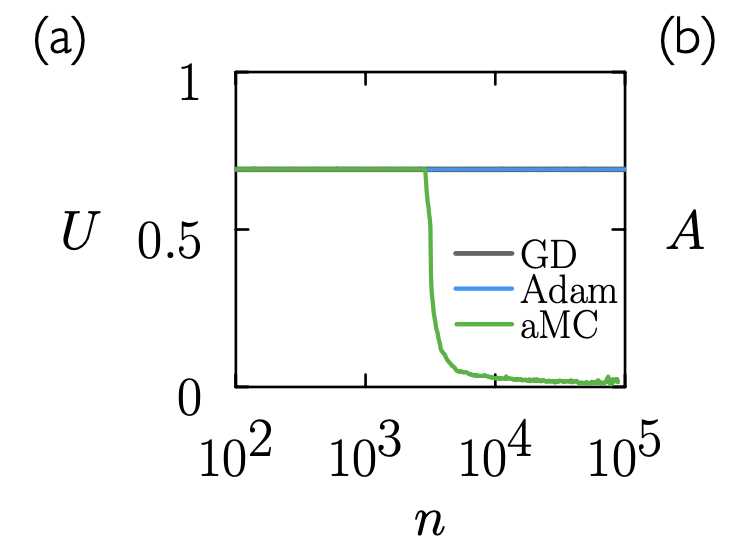

S. Whitelam, V. Selin, I. Benlolo, C. Casert, I. Tamblyn, Machine Learning: Science and Technology, 3, 4, 045026 (2022)

Gradient descent and its variants are the dominant methods for training neural networks, but they can struggle with vanishing or exploding gradients in deep and recurrent architectures. In this work, we explore zero-temperature Metropolis Monte Carlo as an alternative optimization strategy for training neural networks. We show that standard Monte Carlo can train networks with accuracy comparable to gradient descent, though not necessarily as quickly. However, the method can fail when network structures or neuron activations are strongly heterogeneous. To address this, we introduce an adaptive Monte Carlo algorithm (aMC) that automatically adjusts proposal step sizes to account for such heterogeneity. The intrinsic stochasticity and numerical stability of the Monte Carlo approach allow aMC to successfully train deep neural networks and recurrent neural networks in regimes where gradients are too small or too large for gradient-based methods to succeed, offering a complementary tool for neural network optimization.
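As a rough illustration of the zero-temperature Metropolis idea, the sketch below perturbs every parameter with Gaussian noise and accepts the move only if the loss does not increase. It is a minimal sketch under stated assumptions, not the authors' code: the toy tanh network, the names `mse_loss` and `zero_temperature_mc`, and the rule that shrinks the step size after a run of consecutive rejections are all hypothetical. In particular, that step-size rule is a simple stand-in and is not the paper's aMC algorithm.

```python
import math
import random


def mse_loss(params, xs, ys):
    """Mean squared error of a tiny one-hidden-layer tanh network (hypothetical toy model)."""
    # Assumed parameter layout: [w1 (H values), b1 (H values), w2 (H values), b2]
    h = (len(params) - 1) // 3
    w1, b1, w2, b2 = params[:h], params[h:2 * h], params[2 * h:3 * h], params[3 * h]
    total = 0.0
    for x, y in zip(xs, ys):
        out = b2 + sum(w2[j] * math.tanh(w1[j] * x + b1[j]) for j in range(h))
        total += (out - y) ** 2
    return total / len(xs)


def zero_temperature_mc(loss_fn, params, n_steps=3000, sigma=0.1,
                        patience=100, shrink=0.9, seed=0):
    """Zero-temperature Metropolis Monte Carlo on a flat parameter list.

    Each step proposes a Gaussian move of all parameters and accepts it only
    if the loss does not increase. Shrinking sigma after `patience`
    consecutive rejections is an illustrative assumption, not the paper's aMC.
    """
    rng = random.Random(seed)
    current = list(params)
    current_loss = loss_fn(current)
    rejections = 0
    for _ in range(n_steps):
        proposal = [p + rng.gauss(0.0, sigma) for p in current]
        proposal_loss = loss_fn(proposal)
        if proposal_loss <= current_loss:  # T = 0: uphill moves are never accepted
            current, current_loss = proposal, proposal_loss
            rejections = 0
        else:
            rejections += 1
            if rejections >= patience:
                sigma *= shrink  # hypothetical step-size adaptation
                rejections = 0
    return current, current_loss


# Usage: fit y = sin(x) on a small grid with a 4-unit hidden layer.
xs = [i / 10 for i in range(-20, 21)]
ys = [math.sin(x) for x in xs]
init = [0.0] * (3 * 4 + 1)
init_loss = mse_loss(init, xs, ys)
params, final_loss = zero_temperature_mc(lambda p: mse_loss(p, xs, ys), init)
print(f"initial loss {init_loss:.4f}, final loss {final_loss:.4f}")
```

Because uphill moves are never accepted at zero temperature, the loss is non-increasing by construction. No gradients are computed, so each update is unaffected by how small or large the gradients of the loss happen to be.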