|

Time and temporal abstraction in continual learning: tradeoffs, analogies and regret in an active measuring setting

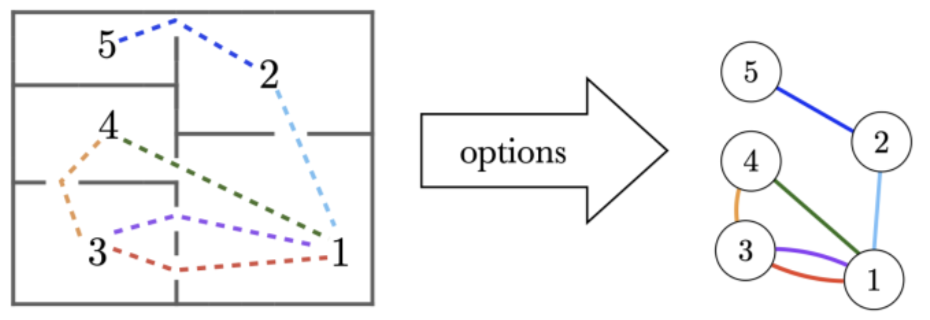

V. Letourneau, C. Bellinger, I. Tamblyn, Maia Fraser 2nd Conference on Lifelong Learning Agents (CoLLAs) (2023) Continual learning agents must balance the cost of acquiring information against the value it provides for decision-making, yet the role of temporal abstraction in this tradeoff remains underexplored. In this work, we establish a direct analogy between intermediate representations in semi-supervised learning (SSL) and temporal abstraction in hierarchical reinforcement learning (HRL), showing how factorization and partial information (labeling in SSL, observation in RL) play crucial roles in both settings. Focusing on a specific class of partially observed Markov decision processes (POMDPs) centered on active measuring -- where the agent must decide when to pay for costly state observations -- we leverage theoretical tools from SSL to derive regret lower bounds that apply universally to any RL algorithm operating without temporal abstraction in this restricted problem class. These results provide theoretical grounding for understanding when temporal abstraction mechanisms become necessary for effective lifelong learning, with implications for industrial control applications. |